Opinions expressed by Entrepreneur contributors are their own.

Ethical artificial intelligence is trending this year, and thanks to editorials like a16z’s techno-optimist manifesto, every company, big and small, is seeking ways to do it. Both Adobe and Getty Images, for example, released ethical and commercially safe models that stick to their own licensed images. Adobe also recently introduced a watermark to let users know how much AI was used in any given image.

Still, companies have received backlash on social media for using generative AI in their work. Disney faced uproar twice this year over its use of AI in Marvel’s Secret Invasion and Loki season two. The use of undisclosed AI also turned an indie book cover contest into a heated controversy, forcing the author running it to shut the contest down permanently.

The stakes are high — McKinsey & Company estimates generative AI will add up to $4 trillion annually to the global economy across all industry sectors. I increasingly speak with clients interested in generative AI solutions across social media, marketing, search engine optimization and public relations.

Nobody wants to be left behind, but AI adoption must be done ethically to succeed.

Related: AI Isn’t Evil–But Entrepreneurs Need to Keep Ethics in Mind As They Implement It

Ethical concerns of using AI

AI has a lot of promise, but it also comes with ethical risks. These concerns must be taken seriously because Millennials and Gen Z especially favor ethical brands, with up to 80% of those surveyed saying they’re likely to base their purchasing decisions on a brand’s purpose. Of course, using AI ethically is easier said than done–Amazon learned this the hard way.

Amazon implemented an AI-powered hiring algorithm in 2014 to automate the hiring process. The system was built to ignore federally protected categories like sex, but it still taught itself to favor men. Because it relied on historical hiring data, it penalized applications that included women’s colleges, clubs and degree programs.

The episode highlights how historical biases can still impact us today, and the tech industry still has a long way to go toward being inclusive. Amazon reinstated its hiring bot as tech layoffs disproportionately impact women, and this is just one example of AI bias. AI systems can easily discriminate against any marginalized group if they are not carefully managed at every step of development, implementation and execution.

Still, some businesses are finding ways to navigate this ethical minefield.

Related: How Women Can Beat the Odds in the Tech Industry

Doing AI the right way

Being a first mover comes with risk, especially in today’s world of “moving fast and breaking things.” Beyond bias, there are also questions about the legality of current generative AI models. AI leaders OpenAI, Stability AI and Midjourney attracted lawsuits from authors, developers and artists, and incumbent partners like Microsoft and DeviantArt got caught in the crossfire.

This fueled an atmosphere where creative professionals on social media are divided into two camps: pro- and anti-AI. Artists organized “No AI” protests on both DeviantArt and ArtStation, and artists are fleeing Twitter/X for Bluesky and Threads after Elon Musk’s controversial AI training policy was implemented in September.

Many companies are afraid of mentioning AI, while others dove headfirst into the fray with projects like Disney’s Toy Story x NFL mashup and Coca-Cola’s AI-generated Year 3000 flavor. Based on reviews from taste testers, that flavor could go down in history as the new New Coke, although the company did get a win from its AI-generated commercial.

In fact, Disney was widely praised for the Toy Story football game, and some platforms are finding ways to empower their users.

Related: How Can You Tell If AI Is Being Used Ethically? Here Are 3 Things to Look for

Optim-AI-zing for ethics

Today, building ethical AI is a top priority for businesses and consumers alike, with large enterprises like Walmart and Meta setting policies to ensure responsible AI usage companywide. Meanwhile, startups like Anthropic and Jada AI are also focused on using ethical AI for the good of humanity. Here is how to use AI ethically.

1. Use an ethically sourced AI model

Not every AI is trained equally, and the bulk of legal concerns revolve around unlicensed IP. Be sure to perform due diligence on your AI and data vendors to avoid trouble. This includes verifying the data is properly licensed and asking about what steps were taken to ensure equity and diversity.

2. Be transparent

Honesty is the best policy, and it’s important to be transparent about whether you’re using AI. Some people won’t like the truth, but even more will hate that you lied. The White House Executive Order on AI sets forth standards on properly labeling the origin of any creative work, and it’s a good habit to get into so people know what they’re getting.

3. Keep humans in the loop

No matter how well it’s trained, AI can still go off the rails. It makes mistakes, and it’s important to involve humans at every stage of the process. Understand that ethical AI is not a “set it and forget it” thing. It’s a process that should be carefully executed throughout the workflow.

The legal actions against AI companies are still pending, and governments around the world are still debating how to regulate the technology. What’s legal today may not be legal next year after the dust settles, but these tips will help ensure you’re using AI as safely as possible and setting the right example.

[ad_2]

Source link

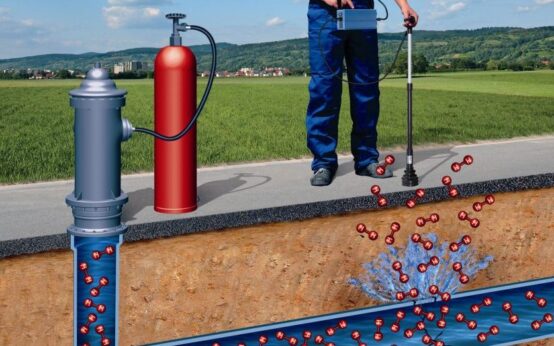

Best Underground Water Leak Detection Equipment 2024

Best Underground Water Leak Detection Equipment 2024  Best Backyard Ideas: Turn Your Outdoor Area Into a Creative and Calm Haven

Best Backyard Ideas: Turn Your Outdoor Area Into a Creative and Calm Haven  Babar, Rizwan are good players but not whole team, says Mohammad Hafeez

Babar, Rizwan are good players but not whole team, says Mohammad Hafeez  Pak vs NZ: Green Shirts aim to bounce back against Kiwis today

Pak vs NZ: Green Shirts aim to bounce back against Kiwis today